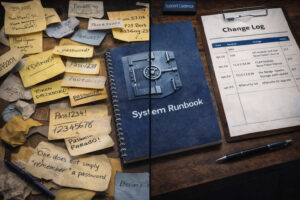

It’s 2 a.m. on a Tuesday when your phone lights up. Not a server crash. Not a CF security incident. Worse. Your senior ColdFusion developer – the one who’s been with you for eight years, the only person who really understands the payment processing system – just sent you a resignation email. Two weeks’ notice. […]

TeraTech ColdFusion Blog

CEOs: Why Your ColdFusion App Can’t Scale (It’s Not the Technology)

Your ColdFusion customer portal needs to launch in time for two new markets. The business needs it to be live in three months. The current estimate says nine. Your competitor shipped theirs last quarter. That gap gets noticed fast. It results in delayed revenue, slower market entry, and a wider gap between your plan and […]

CEO ColdFusion Modernization: The Growth Drag Hiding in Plain Sight

Do you know what your legacy ColdFusion app is costing you? I mean the hidden drag… The launch that slips. The feature that stalls. The plan that dies on the long road ahead because the app cannot keep up. That is when an old app goes from IT problem to growth problem. One company came […]

The ColdFusion Modernization Case a CIO Can Defend to the Board

Jason Meuter, Vice President of Software Engineering at Fidano, walked into a mess. The ColdFusion app had real security holes. The company also had a hard deadline. Hit SOC 2, or put major contracts worth millions at risk. It was a stressful time. Leadership had two roads ahead. One was a full rewrite in a […]

Still on ColdFusion 2016, 2018, or 2021? Why “Keeping the Lights On” Is No Longer Safe

If your organization is still running Adobe ColdFusion 2016, 2018, or the recently phased-out 2021, you are not alone. A lot of mission-critical ColdFusion Markup Language (CFML) applications continue to deliver value, and the business pressure to leave them untouched is real. That long-term stability remains one of ColdFusion’s biggest selling points. This year, though, […]

For CEOs, Legacy ColdFusion = M&A Valuation Risk

If you’re a CEO, the hidden cost of legacy ColdFusion can torpedo your company’s M&A valuation. And the only person looking like a hapless Hobbit will be you. Most boards dance around the subject of ColdFusion maintenance. First, it’s boring. Second, it’s abstract. Plus, it shouldn’t be an issue. Instead, they ask about stability, security […]

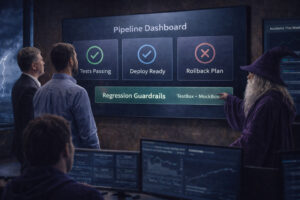

How Pro CIOs Turn ColdFusion Maintenance Chaos into Quieter Board Meetings

ColdFusion maintenance should not feel like sending the Fellowship into Mordor without a map. Yet plenty of chief information officers (CIOs) live that reality. A “simple” fix lands, three other things break, and the week disappears into incident response. That upgrade you keep postponing starts to feel less like a project and more like a […]

The hidden CEO cost of legacy CF security: breach risk, insurance premiums, and exit drag

Most CEOs focus on reputation, valuation, and growth. A legacy ColdFusion security posture shapes all three. Governance and lifecycle discipline drive the outcome. The upshot? Surprise costs, slower deals, and tense board questions. You can see the risk in premiums, diligence findings, and incident exposure. Breach risk has become brand risk. A decade ago, a […]

CIOs: Is Your ColdFusion App Security Audit-Defensible?

If an external auditor arrived tomorrow, could you, as the CIO, explain your ColdFusion security posture clearly, with evidence and without scrambling? Boards and CEOs want documented controls. Cyber insurers raise the bar during underwriting. Regulators look for proof, not context. A single high-risk finding can turn into an awkward conversation with the CEO, fast. […]

From CF Crash Fire Fighting to Predictability: A CIO’s Guide to Stabilizing ColdFusion Systems

For many CIOs, ColdFusion incidents aren’t just technical problems. They’re 3 a.m. phone calls. Board meeting explanations. The reason you can’t take a vacation. They’re firefighting. They are high-stress moments that invite scrutiny, disrupt operations, and quietly erode confidence. Every incident raises the same questions: Why did this happen? Why now? And how do we prevent […]